HQ Team

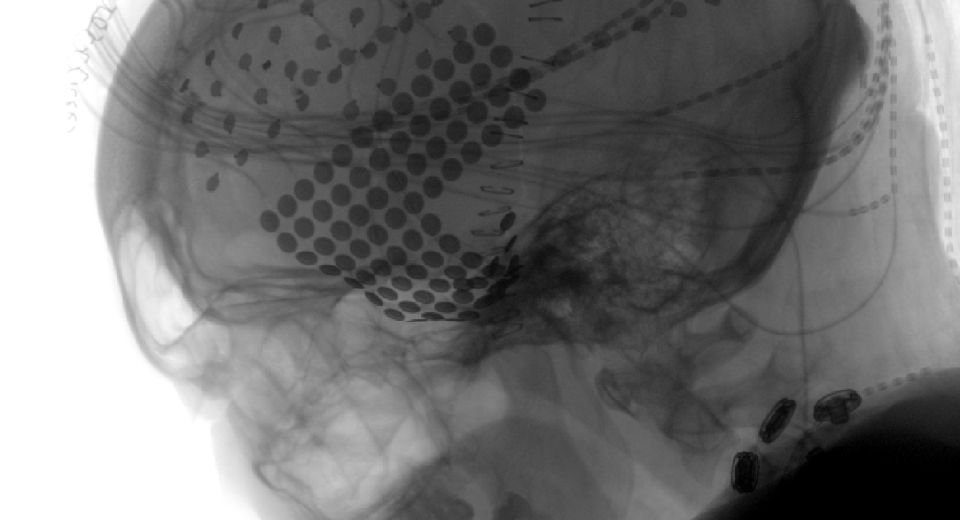

August 17, 2023: Neuroscientists at the University of California, Berkeley, used AI software to reconstruct a song from brain recordings, adding another brick in the wall of our understanding of how the brain processes music.

It is the first time a song has been reconstructed from intracranial electroencephalography (iEEG) recordings, and the technique may help people who have trouble communicating because of stroke or paralysis.

The recordings from electrodes on the brain surface could help reproduce the musicality of speech that’s missing from today’s robot-like reconstructions, the researchers wrote in the journal PLOS Biology.

Berkeley researchers wanted to capture the electrical activity of brain regions tuned to attributes of the music — tone, rhythm, harmony, and words — to see if they could reconstruct what the patient was hearing.

Neuroscientists at Albany Medical Center diligently recorded the activity of electrodes placed on the brains of patients being prepared for epilepsy surgery for more than a decade.

Another Brick in the Wall

After Berkeley neuroscientists analyzed data from 29 such patients in detail, the answer was yes: the recorded electrical activity could be used to reconstruct what the patients were hearing.

The patients listened to an approximately 3-minute Pink Floyd song, Another Brick in the Wall, Part 1, from the 1979 album The Wall.

Robert Knight, a Berkeley professor of psychology at the Helen Wills Neuroscience Institute, conducted the study.

With the help of his postdoctoral fellow Ludovic Bellier, he hoped to go beyond previous studies, which had tested whether decoding models could identify different musical pieces and genres, to actually reconstruct music phrases through regression-based decoding models.

The phrase “All in all it was just a brick in the wall” came through recognizably in the reconstructed song, its rhythms intact, and the words muddy, but decipherable.

This is the first time researchers have reconstructed a recognizable song from brain recordings.

Musical elements of speech

The reconstruction shows the feasibility of recording and translating brain waves to capture the musical elements of speech, as well as the syllables.

In humans, these musical elements, called prosody — rhythm, stress, accent, and intonation — carry meaning that the words alone do not convey.

“One of the things for me about music is it has prosody and emotional content,” Mr Knight said.

“As this whole field of brain-machine interfaces progresses, this gives you a way to add musicality to future brain implants for people who need it, someone who’s got ALS or some other disabling neurological or developmental disorder compromising speech output.”

“It gives you the ability to decode not only the linguistic content but some of the prosodic content of speech, some of the affect. I think that’s what we’ve really begun to crack the code on,” he said.

Scalp electrodes

As brain recording techniques improve, it may be possible someday to make such recordings without opening the brain, perhaps using sensitive electrodes attached to the scalp.

Scalp EEG can measure brain activity to detect an individual letter from a stream of letters, but the approach takes at least 20 seconds to identify a single letter, making communication effortful and difficult.

“Let’s hope, for patients, that in the future we could, from just electrodes placed outside on the skull, read activity from deeper regions of the brain with good signal quality. But we are far from there,” Mr Bellier said.

Eddie Chang, a UC San Francisco neurosurgeon and senior co-author of a 2012 paper, has recorded signals from the motor area of the brain associated with jaw, lip, and tongue movements to reconstruct the speech intended by a paralyzed patient, with the words displayed on a computer screen.

That work, reported in 2021, employed artificial intelligence to interpret the brain recordings from a patient trying to vocalize a sentence based on a set of 50 words.

Auditory cortices

While Chang’s technique is proving successful, the new study suggests that recording from the auditory regions of the brain, where all aspects of sound are processed, can capture other aspects of speech that are important in human communication.

“Decoding from the auditory cortices, which are closer to the acoustics of the sounds, as opposed to the motor cortex, which is closer to the movements that are done to generate the acoustics of speech, is super promising,” Mr Bellier said.

“It will give a little color to what’s decoded.”

The researchers also confirmed that the right side of the brain is more attuned to music than the left side. “Language is more left brain. Music is more distributed, with a bias toward right,” Mr Knight said.

Mr Knight is embarking on new research to understand the brain circuits that allow some people with aphasia due to stroke or brain damage to communicate by singing when they cannot otherwise find the words to express themselves.